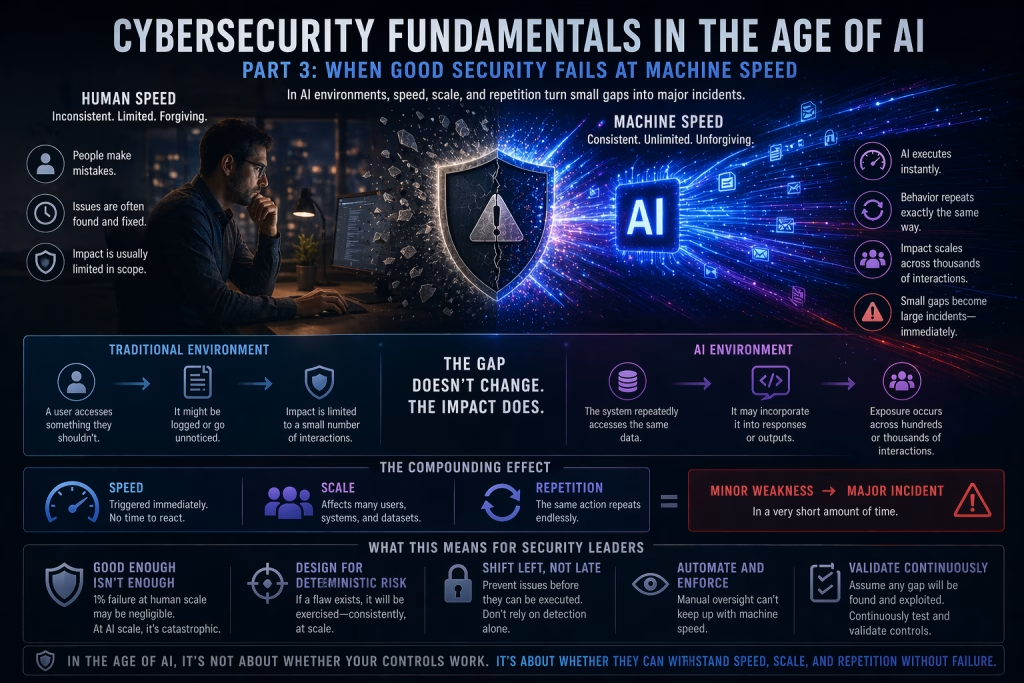

When Good Security Fails at Machine Speed

In cybersecurity, most organizations don’t fail because they lack controls.

They fail because those controls were never designed to operate under extreme scale, speed, and repetition.

In a human-driven environment, “good enough” security can often hold.

In an AI-driven environment, it breaks.

The Hidden Assumption Behind “Good Security”

Most security programs are built with an implicit assumption:

Errors will happen, but they will be limited.

A misconfiguration might exist for weeks before it’s noticed.

A user might make a mistake once, then learn from it.

A gap in monitoring might go undetected until a specific event triggers attention.

These assumptions have historically been manageable because:

- Human actions are inconsistent

- Activity happens at a relatively controlled pace

- Errors don’t typically repeat at scale

This creates a buffer, a margin for error.

AI removes that buffer.

Speed Changes Everything

AI systems operate at a fundamentally different speed than humans.

They can:

- Process thousands of requests per second

- Generate responses in seconds or less

- Interact with multiple systems simultaneously

- Execute actions continuously without pause

That speed transforms small issues into immediate risks.

A control that works “most of the time” is no longer sufficient.

Because AI doesn’t wait for failure to be discovered.

It keeps executing through the failure.

Scale Turns Gaps into Incidents

Consider a simple example:

A permission setting is slightly too broad.

In a traditional environment:

- A user might access something they shouldn’t

- The issue might be logged or go unnoticed

- The impact is limited to a small number of interactions

In an AI environment:

- The system may repeatedly access that same data

- It may incorporate it into responses or outputs

- The exposure can occur across hundreds or thousands of interactions

What was once a minor misconfiguration becomes a large-scale data exposure event.

The gap didn’t change.

The impact did.

Repetition Eliminates Randomness

Human-driven systems have randomness built in.

Not every user makes the same mistake.

Not every action follows the same path.

Not every vulnerability is exercised consistently.

AI removes that randomness.

If a condition exists, the system will:

- Encounter it repeatedly

- Execute the same behavior consistently

- Produce the same outcome every time

This creates a dangerous dynamic:

Failures are no longer occasional; they are deterministic.

And deterministic failures are far easier to exploit.

The Compounding Effect

Speed, scale, and repetition don’t operate independently.

They compound.

A small issue becomes:

- Fast → triggered immediately

- Scaled → impacts many interactions

- Repeated → persists until explicitly fixed

This creates a multiplier effect where:

Minor weaknesses become major incidents very quickly.

Why “Mostly Secure” Is No Longer Secure

In traditional environments, organizations could tolerate some level of imperfection.

Controls didn’t need to be flawless; they needed to be effective.

In AI environments, that tolerance disappears.

Because:

- A 1% failure rate at human scale might be negligible

- A 1% failure rate at AI scale can be catastrophic

If an AI system processes 10,000 interactions:

- 1% failure = 100 incidents

And those incidents are not random.

They are consistent, repeatable, and often identical.
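The arithmetic behind that comparison can be sketched in a few lines of Python. The function name and the human-scale volume of 50 interactions are illustrative assumptions; only the 1% rate and the 10,000-interaction figure come from the example above.

```python
def expected_incidents(interactions: int, failure_rate: float) -> float:
    """Expected number of failed interactions for a given volume and failure rate."""
    if interactions < 0 or not 0.0 <= failure_rate <= 1.0:
        raise ValueError("interactions must be >= 0 and failure_rate within [0, 1]")
    return interactions * failure_rate


FAILURE_RATE = 0.01   # the 1% failure rate from the example
HUMAN_SCALE = 50      # hypothetical volume for a human-driven process
AI_SCALE = 10_000     # the AI-scale volume from the example

# Same control, same failure rate; only the volume changes.
print(f"human scale: {expected_incidents(HUMAN_SCALE, FAILURE_RATE):.1f} expected incidents")
print(f"AI scale:    {expected_incidents(AI_SCALE, FAILURE_RATE):.1f} expected incidents")
```

The failure rate is held constant on purpose: the control has not become worse, but the number of times it is exercised has grown by orders of magnitude.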

Real-World Implications

This shift is already visible in how AI systems are being used and misused:

- Generative AI tools unintentionally exposing sensitive data through prompts

- Automated systems accessing data beyond intended boundaries

- AI-driven processes amplifying misconfigurations in access control

- Attackers exploiting repeatable behaviors through prompt manipulation

These are not entirely new types of vulnerabilities.

They are existing weaknesses operating at a new scale.

Rethinking Control Design

Security controls must now be evaluated differently.

It’s no longer enough to ask:

“Does this control work?”

The better question is:

“Does this control fail safely under continuous, high-speed execution?”

Controls must be:

- Precise

- Enforced consistently

- Designed to handle repetition without degradation

- Capable of operating at machine speed
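One way to read "fail safely" is as a fail-closed check: when the control cannot reach a decision, it denies. The sketch below is a minimal illustration under that assumption, not a reference implementation. `Policy`, `lookup_policy`, `is_allowed`, and the agent and resource names are all hypothetical.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class Policy:
    # Explicit allow-list of resources; anything not listed is denied.
    allowed: frozenset = field(default_factory=frozenset)


def lookup_policy(store: dict, principal: str) -> Policy:
    """Hypothetical policy lookup; raises KeyError when no policy is stored."""
    return store[principal]


def is_allowed(store: dict, principal: str, resource: str) -> bool:
    """Fail closed: a missing policy or any lookup error results in a denial."""
    try:
        policy = lookup_policy(store, principal)
    except Exception:
        # The control could not decide, so it denies rather than allows.
        return False
    return resource in policy.allowed


store = {"report-agent": Policy(allowed=frozenset({"sales/summary"}))}

print(is_allowed(store, "report-agent", "sales/summary"))   # explicitly granted
print(is_allowed(store, "report-agent", "hr/salaries"))     # not on the allow-list
print(is_allowed(store, "unknown-agent", "sales/summary"))  # no policy stored
```

Because the default is denial, running the same check thousands of times against a broken or missing policy produces thousands of refusals rather than thousands of exposures.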

This requires a shift from:

- Reactive controls → Preventative controls

- Manual oversight → Automated enforcement

- Static configurations → Continuous validation
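Continuous validation can be as simple as repeatedly comparing what is currently granted against an approved baseline. The following is a minimal sketch under that assumption; `find_excess_grants`, the principal names, and the resource names are hypothetical, and a real deployment would pull both inputs from its own identity and policy systems.

```python
def find_excess_grants(
    approved: dict[str, set[str]],
    current: dict[str, set[str]],
) -> dict[str, set[str]]:
    """Return, per principal, any grants that exceed the approved baseline."""
    excess: dict[str, set[str]] = {}
    for principal, grants in current.items():
        # A principal with no approved baseline is treated as having no approved grants.
        extra = grants - approved.get(principal, set())
        if extra:
            excess[principal] = extra
    return excess


approved = {"report-agent": {"sales/summary"}}
current = {
    "report-agent": {"sales/summary", "hr/salaries"},  # drifted beyond the baseline
    "test-agent": {"sales/summary"},                   # never approved at all
}

# Intended to run on a schedule or on every configuration change.
for principal, extra in sorted(find_excess_grants(approved, current).items()):
    print(f"{principal}: unapproved access to {sorted(extra)}")
```

Run automatically, a check like this shortens the window in which an overly broad permission can be exercised at machine speed.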

The End of Margin for Error

AI doesn’t introduce chaos.

It introduces precision at scale.

And that precision exposes any weakness that exists.

The margin for error that organizations relied on in human-driven systems is gone.

What remains is a binary reality:

- Controls either hold under pressure

- Or they fail quickly, repeatedly, and visibly

Looking Ahead

If Part 2 highlighted the shift from human inconsistency to machine consistency, Part 3 reveals the consequence:

Consistency + speed + scale turns small weaknesses into major risks.

So how do organizations respond?

The answer lies in strengthening the controls that matter most.

In the next part of this series, we’ll focus on where that effort should be concentrated:

Part 4: Why Identity and Data Become Non-Negotiable

Because in an AI-driven world, controlling who has access and what data is exposed becomes the difference between manageable risk and systemic failure.

William Tulaba is a cybersecurity executive and security engineering leader focused on enterprise security strategy, cloud risk, and security operations.