Part 1: The Illusion That AI Changes Everything

Artificial Intelligence is dominating every technology conversation right now.

Organizations are racing to adopt it. Vendors are embedding it into their platforms. Security teams are being asked, often urgently, how to secure it.

And in the middle of all of this, a common narrative has emerged:

“AI changes everything.”

It’s a compelling idea.

It’s also incomplete, and potentially dangerous.

The Pattern We’ve Seen Before

This isn’t the first time cybersecurity has faced a major technological shift.

We’ve seen this pattern play out before:

- The rise of the internet

- The shift to cloud computing

- The explosion of mobile devices

- The adoption of SaaS platforms

Each of these moments felt disruptive. Each introduced new risks. Each forced organizations to adapt.

And each time, the same conclusion emerged:

The fundamentals of cybersecurity didn’t change.

Identity still mattered.

Access control still mattered.

Data protection still mattered.

Monitoring and response still mattered.

What changed was the environment in which those fundamentals had to operate.

AI Doesn’t Replace the Fundamentals

Artificial Intelligence is no different in that regard.

AI systems still rely on:

- Access to data

- Defined permissions

- Underlying infrastructure

- External integrations

They are still subject to the same core risks:

- Unauthorized access

- Data exposure

- Misconfiguration

- Lack of visibility

- Weak monitoring and response

The idea that AI requires an entirely new cybersecurity foundation misses a critical point:

The foundation already exists.

What AI Actually Changes

If AI doesn’t replace cybersecurity fundamentals, what does it change?

The answer is simple:

It changes the consequences of getting them wrong.

Unlike the people who operate traditional systems, AI does not rely on judgment, experience, or intuition.

It operates on:

- Inputs

- Training data

- Mathematical models

- Programmed constraints

It does not pause.

It does not question intent.

It does not recognize risk unless explicitly designed to do so.

It simply executes.

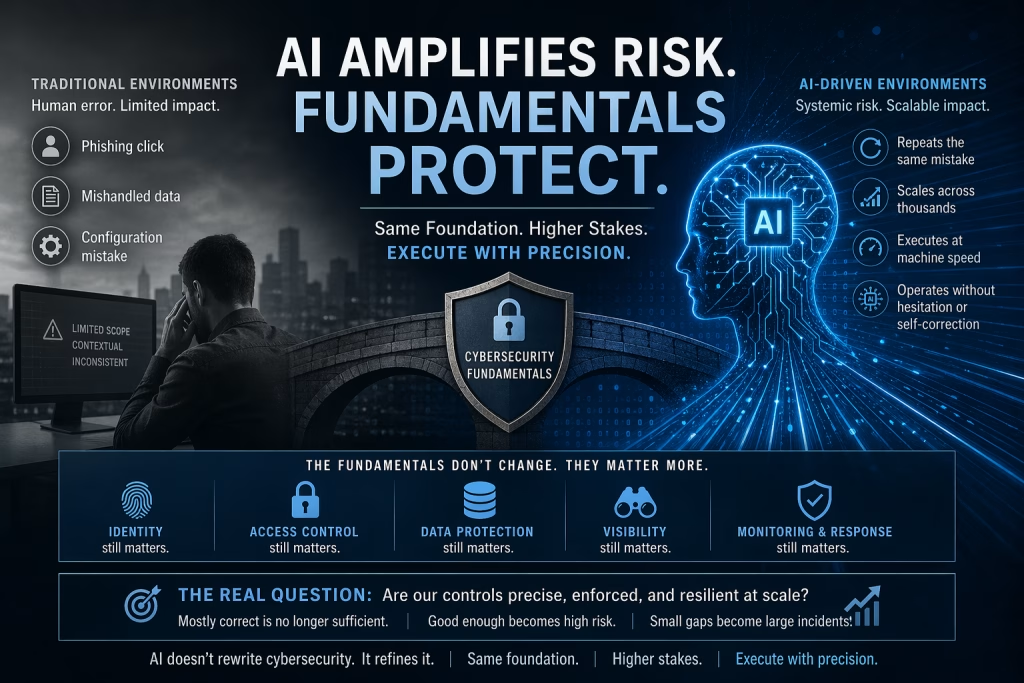

From Human Error to Systemic Impact

In traditional environments, many security failures are tied to human behavior.

- An employee clicks a phishing link

- A user mishandles sensitive data

- An administrator makes a configuration mistake

These events are often:

- Contextual

- Inconsistent

- Limited in scope

They can be serious, but they are usually contained.

AI changes this dynamic completely.

When an AI system behaves incorrectly, it can:

- Repeat the same mistake consistently

- Scale the impact across thousands of interactions

- Execute actions at machine speed

- Operate without hesitation or self-correction

This is no longer isolated human error.

This is systemic, repeatable, and scalable risk.
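The scale argument can be made concrete with some simple arithmetic. The sketch below is illustrative only: the `Actor` type, the workloads, and the 1% flaw rate are assumed numbers chosen for the example, not measurements. It shows that the same flaw, applied at machine volume, yields far more faulty actions per day.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Actor:
    name: str
    actions_per_day: int    # hypothetical workload
    faulty_fraction: float  # share of actions affected by the same flaw


def expected_faulty_actions(actor: Actor, days: int = 1) -> float:
    """Expected number of faulty actions over the given number of days."""
    return actor.actions_per_day * actor.faulty_fraction * days


# Assumed figures for illustration; both actors share the same 1% flaw rate.
analyst = Actor("human analyst", actions_per_day=50, faulty_fraction=0.01)
agent = Actor("AI agent", actions_per_day=10_000, faulty_fraction=0.01)

for actor in (analyst, agent):
    rate = expected_faulty_actions(actor)
    print(f"{actor.name}: {rate:.1f} expected faulty actions per day")
```

The flaw rate is identical in both cases. Only the volume differs, and with these assumed numbers the expected daily impact grows by a factor of 200.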

The Real Risk: False Confidence

One of the biggest risks organizations face with AI is not the technology itself.

It’s the belief that existing controls are “good enough.”

That belief tends to hide gaps such as:

- A slightly misconfigured access policy

- A loosely defined data boundary

- A monitoring gap that hasn’t caused issues yet

In a human-driven system, these gaps might go unnoticed or have limited impact.

In an AI-driven system, those same gaps can be:

- Exploited instantly

- Repeated continuously

- Amplified across the organization
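As a minimal sketch of how a "slightly misconfigured" access policy widens exposure, consider a wildcard grant compared against the access that was actually intended. Everything here is hypothetical: the resource names, the `reports/*` pattern, and the helper functions are assumptions for illustration and do not model any specific product's policy engine. Pattern matching uses Python's standard `fnmatch` module, where `*` also matches `/`.

```python
from fnmatch import fnmatchcase

# Hypothetical resource inventory.
RESOURCES = [
    "reports/q1-summary",
    "reports/q2-summary",
    "reports/hr/salaries",
    "reports/legal/contracts",
    "tickets/open",
]

# What the policy author meant to allow.
INTENDED = {"reports/q1-summary", "reports/q2-summary"}

# What the policy actually says. In fnmatch, '*' matches '/' as well,
# so this pattern also covers the nested hr/ and legal/ paths.
GRANTED_PATTERN = "reports/*"


def reachable(pattern: str, resources: list[str]) -> set[str]:
    """Return every resource the pattern permits."""
    return {r for r in resources if fnmatchcase(r, pattern)}


def excess_access(pattern: str, intended: set[str], resources: list[str]) -> set[str]:
    """Return resources that are granted but were never intended."""
    return reachable(pattern, resources) - intended


excess = excess_access(GRANTED_PATTERN, INTENDED, RESOURCES)
print(sorted(excess))
```

A person using this grant would likely open only the reports they were looking for. An automated agent that enumerates everything it is permitted to read would also reach the two unintended resources, every time it runs. A check like `excess_access` could be run on a schedule to catch this kind of drift, assuming the intended access is recorded somewhere it can be compared against.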

AI doesn’t introduce entirely new categories of failure.

It exposes and accelerates the ones that already exist.

Why This Matters for Security Leaders

For security leaders, this shift requires a change in mindset.

The question is no longer:

“Do we have the right controls in place?”

The question becomes:

“Are our controls precise, enforced, and resilient at scale?”

Because in an AI-driven environment:

- “Mostly correct” is no longer sufficient

- “Good enough” becomes high risk

- Small gaps become large incidents

The margin for error disappears.

A More Disciplined Approach to Security

The organizations that succeed in the age of AI will not be the ones that abandon cybersecurity fundamentals.

They will be the ones that:

- Apply them consistently

- Enforce them rigorously

- Validate them continuously

They will treat identity, data protection, monitoring, and access control not as baseline requirements, but as critical control points that must operate flawlessly.

Looking Ahead

Artificial Intelligence is not rewriting cybersecurity.

It is refining it.

It is forcing organizations to confront how well they have actually implemented the controls they have relied on for years.

In this series, we will explore how AI changes the nature of risk, not by replacing the fundamentals, but by amplifying the consequences of getting them wrong.

Because in the age of AI, cybersecurity isn’t about reinventing what works.

It’s about executing it with precision.

William Tulaba is a cybersecurity executive and security engineering leader focused on enterprise security strategy, cloud risk, and security operations.