Humans vs. Machines — A Fundamental Shift in Risk

In cybersecurity, we’ve always designed controls around one central reality:

People make mistakes.

Security awareness programs, phishing simulations, access reviews, and approval workflows all exist because human behavior is inherently inconsistent. People hesitate. They question. They make judgment calls. Sometimes they get it wrong, but just as often, they catch something that doesn’t feel right.

For decades, cybersecurity has been built around managing that unpredictability.

Artificial Intelligence changes that equation entirely.

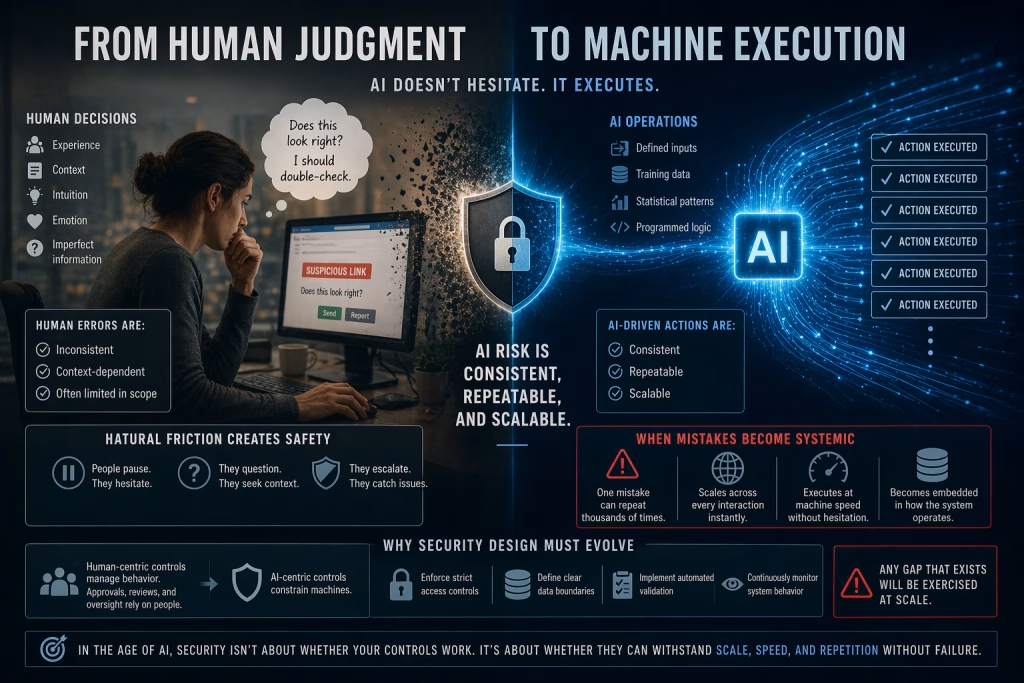

From Human Judgment to Machine Execution

Humans make decisions based on:

- Experience

- Context

- Intuition

- Emotion

- Imperfect information

That makes human behavior messy, but it also creates natural friction.

A person might pause before clicking a suspicious link.

They might question an unusual request.

They might escalate something that doesn’t seem right.

AI does none of that.

AI systems operate on:

- Defined inputs

- Training data

- Statistical patterns

- Programmed logic

They don’t hesitate.

They don’t question intent.

They don’t apply judgment beyond what they’ve been trained to recognize.

They execute.

The Predictability Problem

At first glance, predictability might sound like a benefit.

In reality, it introduces a new kind of risk.

Human errors are:

- Inconsistent

- Context-dependent

- Often limited in scope

AI-driven actions are:

- Consistent

- Repeatable

- Scalable

If a human makes a mistake, it might happen once.

If an AI system makes a mistake, it can happen thousands of times in exactly the same way.

That consistency is what makes AI risk fundamentally different.
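
To make the contrast concrete, here is a minimal Python sketch. The `flawed_rule` function and the sample requests are hypothetical, invented purely for illustration. The point is only that an automated rule with a flaw fails the same way on every matching input.

```python
def flawed_rule(request: dict) -> bool:
    """Hypothetical automated check: approve anything from an internal address.

    The flaw: it never looks at how sensitive the requested data is.
    """
    return request["source"].startswith("10.")


# 1,000 identical requests for sensitive data from an internal address.
requests = [{"source": "10.0.0.5", "data": "payroll"} for _ in range(1000)]

# Count how many of those requests the flawed rule approves.
wrongly_approved = sum(1 for r in requests if flawed_rule(r))
print(wrongly_approved)  # 1000: the same flaw fires on every request
```

A person handling the same queue might approve a few of these by mistake. The rule approves all of them, every time.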

When Mistakes Become Systemic

Consider a traditional security scenario:

An employee accidentally shares sensitive data.

- The impact is limited to that instance

- The issue can be contained and remediated

- The organization learns and adjusts

Now consider the same scenario in an AI context:

An AI system is exposed to sensitive data through prompts or training inputs.

- The behavior can repeat across every interaction

- Sensitive data may be exposed multiple times

- The issue scales instantly without additional user action

This is no longer an isolated event.

It becomes a systemic issue embedded in how the system operates.

The Absence of “Common Sense”

One of the most overlooked aspects of AI risk is the absence of human intuition.

Humans apply informal checks constantly:

- “This doesn’t look or feel right.”

- “This request seems unusual.”

- “I should double-check this.”

AI systems do not have that layer of reasoning unless it is explicitly engineered.

They do not understand:

- Business context

- Intent behind a request

- The difference between “allowed” and “appropriate”

They only understand what they’ve been trained or instructed to do.

This creates a gap where:

An action can be technically valid and still be dangerous from an operational or security standpoint.
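
One way to picture the gap between “allowed” and “appropriate” is the sketch below. It is an illustration under stated assumptions, not a reference design: the role names, the `MAX_ROUTINE_RECORDS` threshold, and the `decide` function are all hypothetical. It shows the “does this look unusual?” check a person would apply informally being written down as an explicit rule.

```python
ALLOWED_ROLES = {"analyst", "admin"}  # hypothetical permission list
MAX_ROUTINE_RECORDS = 500             # hypothetical "this looks unusual" threshold


def is_allowed(role: str) -> bool:
    """Permission check only: may this role export records at all?"""
    return role in ALLOWED_ROLES


def decide(role: str, record_count: int) -> str:
    """Combine the permission check with an explicit appropriateness check."""
    if not is_allowed(role):
        return "deny"
    # Allowed, but unusually large: hand off for review instead of executing.
    if record_count > MAX_ROUTINE_RECORDS:
        return "escalate"
    return "approve"


print(decide("analyst", 50))      # approve
print(decide("analyst", 50_000))  # escalate
print(decide("guest", 10))        # deny
```

Without the second check, the 50,000-record export is technically valid and goes through. The system only pauses because someone engineered the pause.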

Why This Changes Security Design

When systems shift from human-driven to machine-driven decision-making, security design must evolve.

Traditional controls that rely on human judgment, such as approvals, reviews, or manual oversight, become less effective when no person is in the loop to exercise that judgment.

Instead, organizations must:

- Enforce stricter access controls

- Define clearer data boundaries

- Implement automated validation mechanisms

- Continuously monitor system behavior

Security must move from guiding human behavior to constraining machine behavior.
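
As a rough illustration of constraining machine behavior, the sketch below combines three of the ideas above: strict access control, automated validation, and monitoring. Everything in it is an assumption made for the example, including the action names, the `ALLOWED_ACTIONS` table, and the in-memory `audit_log`. A real deployment would depend on the platform in use.

```python
# Hypothetical allowlist: each permitted action maps to the parameters it may use.
ALLOWED_ACTIONS = {
    "read_ticket": {"ticket_id"},
    "add_comment": {"ticket_id", "text"},
}

# Hypothetical in-memory audit trail; a real system would use durable logging.
audit_log = []


def authorize(action: str, params: dict) -> bool:
    """Default-deny check: permit only known actions with known parameters."""
    allowed_params = ALLOWED_ACTIONS.get(action)
    permitted = allowed_params is not None and set(params) <= allowed_params
    # Record every decision, permitted or not, so behavior can be monitored.
    audit_log.append((action, sorted(params), permitted))
    return permitted
```

With this in place, `authorize("read_ticket", {"ticket_id": 1})` is permitted, while an unlisted action such as `"delete_ticket"`, or a listed action carrying an unexpected parameter, is refused and logged. Anything not explicitly permitted is denied.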

Designing for Deterministic Risk

AI introduces what can be described as deterministic risk.

The system will behave in a predictable way based on its inputs and design.

That means:

- If there is a flaw, it will be repeated

- If there is a gap, it will be exploited consistently

- If there is a weakness, it will scale

This predictability is not inherently negative, but it requires precision.

Security teams must assume:

Any gap that exists will eventually be exercised at scale.
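
If any gap will eventually be exercised, one practical response is to look for gaps exhaustively rather than by spot check. The sketch below enumerates every input combination for a small policy and flags the ones that violate a stated rule. The `policy` function, the `must_deny` invariant, and the role, action, and sensitivity values are hypothetical, and the policy contains a deliberate gap for the search to find.

```python
from itertools import product

ROLES = ["guest", "analyst", "admin"]
ACTIONS = ["read", "export", "delete"]
SENSITIVITY = ["public", "restricted"]


def policy(role: str, action: str, sensitivity: str) -> bool:
    """Hypothetical policy under test."""
    if role == "admin":
        return True
    if role == "analyst":
        return action in {"read", "export"}  # deliberate gap: ignores sensitivity
    return action == "read" and sensitivity == "public"


def must_deny(role: str, action: str, sensitivity: str) -> bool:
    """Hypothetical invariant: only admins may export or delete restricted data."""
    return sensitivity == "restricted" and action != "read" and role != "admin"


# Check every combination, not a sample of them.
gaps = [
    combo
    for combo in product(ROLES, ACTIONS, SENSITIVITY)
    if must_deny(*combo) and policy(*combo)
]
print(gaps)  # [('analyst', 'export', 'restricted')]
```

Exhaustive checks like this only work when the input space is small. Where they do apply, they find the gap before repeated use does.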

The New Role of Security Controls

In a human-centric environment, controls are often designed to:

- Reduce the likelihood of mistakes

- Detect anomalies after the fact

- Provide guardrails around behavior

In an AI-centric environment, controls must:

- Prevent unsafe actions entirely

- Validate inputs and outputs continuously

- Enforce strict boundaries on what systems can access and do

There is less room for “best effort” controls.

The system must be designed to operate safely by default.
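
A minimal sketch of what output validation can look like follows. It is illustrative only: the `release_output` function is hypothetical, and the single pattern it checks (strings formatted like US Social Security numbers) is a stand-in for whatever an organization actually classifies as sensitive.

```python
import re

# Assumption for this example: "sensitive" means text shaped like a US SSN.
SSN_PATTERN = re.compile(r"\b\d{3}-\d{2}-\d{4}\b")

BLOCKED_MESSAGE = "[blocked: output contained a sensitive pattern]"


def release_output(text: str) -> str:
    """Release model output only if it passes validation; otherwise block it."""
    if SSN_PATTERN.search(text):
        # Prevent the unsafe output entirely instead of flagging it afterward.
        return BLOCKED_MESSAGE
    return text


print(release_output("Your ticket has been resolved."))
print(release_output("The customer's SSN is 123-45-6789."))
```

The first call passes through unchanged and the second is replaced with the blocked message. The check runs on every response before release, so it does not depend on anyone noticing a problem later.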

Bridging to What Comes Next

This shift, from human inconsistency to machine consistency, introduces a new challenge:

Even small weaknesses become high-impact risks when executed at machine speed.

In the next part of this series, we’ll explore how this plays out in real environments:

Part 3: When Good Security Fails at Machine Speed

Because in the age of AI, it’s not just about whether your controls work.

It’s about whether they can withstand scale, speed, and repetition without failure.

William Tulaba is a cybersecurity executive and security engineering leader focused on enterprise security strategy, cloud risk, and security operations.